TL;DR: In this post, we build a microservice that uses Azure Functions and other awesome Serverless technologies provided by Azure. We will cover the following features:

- Azure Functions currently supports TypeScript in preview, and we will use the features available today to develop a read/write REST API.

- We leverage the Azure Function Bindings to define Input and Output for our functions.

- We will look at Azure Function Proxies that provide a way to define consistent routing behavior for our function and API calls.

If you want to jump straight in, the source is available on GitHub (https://github.com/niksacdev/sample-api-typescript).

TypeScript support for Azure Functions is in preview state as of now; please use caution when using these in your production scenarios.

Problem Context

We will be building a Vehicle microservice which provides CRUD operations for sending vehicle data to a CosmosDB document store.

The architecture is fairly straightforward and looks like this:

Let’s get started …

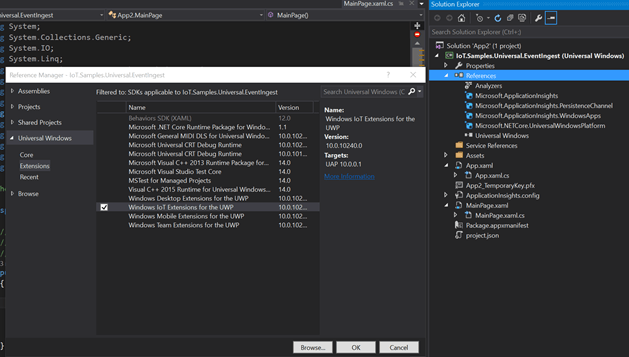

Setting up TypeScript support for Azure Functions

VSCode has amazingly seamless support for Azure Functions and TypeScript including a development, linting, debugging, and deployment extension, so it was a no-brainer to use that for our development. I use the following extensions:

Additionally, you will need the following to kick-start your environment:

- azure-functions-core-tools: You need these to set up the Functions runtime for local development. There are two packages here, and if you are using a Mac environment like me, you will need the 2.0 preview version.

npm install -g azure-functions-core-tools@core

- Node.js (duh!): Note that the preview features currently work with 8.x.x. I have tried them on 8.9.4, which is the latest LTS (Carbon), so you may have to downgrade using nvm if you are using the 9.x.x versions.

Interestingly, the Node version supported by Functions deployed in Azure is v6.5.0 so while you can locally play with higher versions you will have to downgrade to 6.5.0 when deploying to Azure as of today!

You can now use the Function Runtime commands or the Extension UI to create your project and Functions. We will use the Extension UI for our development:

Assuming you have installed the extension and connected to your Azure environment, the first thing we do is create a Function project.

Click on Create New Project and then select the folder that will contain our Function App.

The extension creates a bunch of files required for the FunctionApp to work. One of the key files here is host.json, which allows you to specify configuration for the Function App. If you are creating HTTP triggers, here are some settings that I would recommend tuning to improve throttling and performance:

    {
      "functionTimeout": "00:10:00",
      "http": {
        "routePrefix": "api/vehicle",
        "maxOutstandingRequests": 20,
        "maxConcurrentRequests": 10,
        "dynamicThrottlesEnabled": false
      },
      "logger": {
        "categoryFilter": {
          "defaultLevel": "Information",
          "categoryLevels": {
            "Host": "Error",
            "Function": "Error",
            "Host.Aggregator": "Information"
          }
        }
      }
    }

maxOutstandingRequests can be used to control latency for the function by setting a threshold on the maximum number of requests that are waiting or executing. maxConcurrentRequests controls how many HTTP function requests run concurrently, to optimize resource consumption. functionTimeout is useful if you want to override the timeout of the App Service or Consumption plan, which has a default limit of 5 minutes. Note that the configuration in host.json applies to all functions.
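To make the two HTTP limits concrete, here is a deliberately simplified model of how a host could classify one more incoming request. It is only a sketch under my own assumptions: the `HttpLimits` shape and the `classifyRequest` helper are hypothetical names, and the real runtime's bookkeeping is more involved. The idea is that a request executes while there is a free concurrency slot, waits while the total of executing and queued requests is under the outstanding limit, and is rejected otherwise (the runtime answers with a 429 "Too Busy" in that case).

```typescript
// Simplified model of the host.json HTTP limits; not the runtime's actual code.
interface HttpLimits {
  maxConcurrentRequests: number;  // requests allowed to execute in parallel
  maxOutstandingRequests: number; // executing + queued requests allowed at once
}

type Decision = "execute" | "queue" | "reject";

// Hypothetical helper: decide what happens to one more incoming request.
function classifyRequest(limits: HttpLimits, executing: number, queued: number): Decision {
  const outstanding = executing + queued;
  if (outstanding >= limits.maxOutstandingRequests) {
    return "reject"; // over the outstanding limit: the caller sees 429 Too Busy
  }
  if (executing >= limits.maxConcurrentRequests) {
    return "queue"; // admitted, but must wait for a free execution slot
  }
  return "execute";
}

// Same values as the host.json above.
const limits: HttpLimits = { maxConcurrentRequests: 10, maxOutstandingRequests: 20 };
console.log(classifyRequest(limits, 3, 0));   // execute
console.log(classifyRequest(limits, 10, 4));  // queue
console.log(classifyRequest(limits, 10, 10)); // reject
```

Lowering maxOutstandingRequests trades more rejected requests for a shorter wait for the ones that are admitted, which is why it acts as a latency control.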

Also note that I have a custom value for the routePrefix attribute (by default, the route is api/{functionname}). By adding the prefix, I am specifying that all HTTP functions in this FunctionApp will use the api/vehicle route. This is a good way to set the bounded context for your Microservice, since the prefix now applies to all functions in this FunctionApp. You can also use this to define versioning schemes when doing canary testing. This setting can be used in conjunction with the route attribute in a function's function.json; the Function Runtime prepends the host-level prefix to your function's route.

Note that this behaviour can be simplified using Azure Function Proxies. We will modify these routes and explore this further in the Azure Function Proxies section.
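The way the host-level prefix and the function-level route combine can be sketched in a few lines. This is an illustration rather than the runtime's code: `effectiveRoute` is a hypothetical helper, and it assumes that a function without a route of its own falls back to its function name.

```typescript
// Sketch of how the host-level routePrefix and a function-level route combine.
// Assumption: with no function route, the function name is used instead.
function effectiveRoute(routePrefix: string, functionName: string, route?: string): string {
  const tail = route && route.length > 0 ? route : functionName;
  // Trim stray slashes and drop empty segments so an empty prefix
  // does not leave a leading slash behind.
  return [routePrefix, tail]
    .map((part) => part.replace(/^\/+|\/+$/g, ""))
    .filter((part) => part.length > 0)
    .join("/");
}

console.log(effectiveRoute("api", "vehicle-api-get"));                  // api/vehicle-api-get
console.log(effectiveRoute("api/vehicle", "vehicle-api-post", "data")); // api/vehicle/data
console.log(effectiveRoute("", "vehicle-api-get", "{id}"));             // {id}
```

The first call shows the default behaviour, the second the api/vehicle prefix used in this post, and the third what happens once the prefix is emptied.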

To learn about the other options available in host.json, refer to the host.json reference in the Azure Functions documentation.

Our project is now created; next, we create our Function.

Click Create Function and follow the onscreen instructions to create the function in the same folder as the Function App.

- Since TypeScript is in preview, you will notice a (Preview) tag when selecting the language. This was a feature added in a new build of the extension; if you don’t see TypeScript as a language option, you can enable support for preview languages on the VSCode settings page by specifying the following in your user settings:

"azureFunctions.projectLanguage": "TypeScript"

The above will use TypeScript as the default language and will skip the language selection dialog when creating a Function.

- Select the HTTP Trigger for our API and then provide a Function Name.

- Select Authorization as Anonymous.

Never use Anonymous when deploying to Azure.

You should now have a function created with some boilerplate TypeScript code:

- The function.json defines the configuration for your function, including the Triggers and Bindings; index.ts is our TypeScript Function Handler. Since TypeScript is transpiled to JavaScript, your Function needs the output .js files for deployment and not the .ts file. A common practice is to move these output files into a different directory so you don’t accidentally check them in. However, if you move them to a different folder and run the function locally, you may get the following error:

vehicle-api: Unable to determine the primary function script. Try renaming your entry point script to 'run' (or 'index' in the case of Node), or alternatively you can specify the name of the entry point script explicitly by adding a 'scriptFile' property to your function metadata.

To allow using a different folder, add a scriptFile attribute to your function.json and provide a relative path to the output folder.

"scriptFile": "../vehicle-api-output-debug/index.js"

Make sure to add the destination folder to .gitignore to ensure the output .js and .js.map files are not checked in.

- Two things that do not get added by default are tsconfig.json and tslint.json. While the function will execute without these, I always feel that having them as part of the base setup encourages better coding practices. Also, since we are going to use Node packages, we will add a package.json and install the TypeScript definitions for Node:

npm install @types/node --save-dev

- We now have our Function and FunctionApp created, but there is one last step required before proceeding: setting up the debug environment. At this time, VSCode does not provide support for debugging Azure Functions written in TypeScript. However, you can enable it fairly easily. I came across a blog post from Tsuyoshi Ushio that describes exactly how to do it.

Now that we have all things running, let’s focus on what our functions are going to do.

Building our Vehicle API

Developing the API is no different from your usual TypeScript development. From a Functions perspective, we will split each operation into its own Function. There is a long-running debate over whether you should have a single monolithic function API or one function per operation (GET, POST, PUT, DELETE). Both approaches work, but I feel that within a FunctionApp you should segregate the service as much as possible, in line with the Single Responsibility Principle. Also, in some cases you may achieve better scale by implementing a pattern like CQRS, where your read and write operations go to separate functions. On the flip side, too many small Functions can become a management overhead, so you need to find the right balance. Azure Function Proxies provide a way to surface multiple endpoints with consistent routing behavior; we will leverage this for our API in the discussion below.

In a nutshell, a FunctionApp is a Bounded Context for the Microservice, and each Function is an operation exposed by that Microservice.

For our Vehicle API we will create two functions:

- vehicle-api-get

- vehicle-api-post

You can create PUT and DELETE functions similarly.

So, how do we make sure that each API is called only for the designated REST operation? You can define this in the function.json using the methods array.

For example, vehicle-api-get is an HTTP GET operation and will be configured as below. Note that the anonymous authLevel is only for local testing; don't do this when deploying to Azure.

    {
      "authLevel": "anonymous",
      "type": "httpTrigger",
      "direction": "in",
      "name": "req",
      "route": "",
      "methods": [
        "get"
      ]
    },

Adding CosmosDB support to our Vehicle API

The following TypeScript code allows us to access a CosmosDB store and retrieve data based on a Vehicle Id. This represents the HTTP GET operation for our Vehicle API.

    import { Collection } from "documentdb-typescript";

    export async function run(context: any, req: any) {
      context.log("Entering GET operation for the Vehicle API.");

      // get the vehicle id from the url; route parameters arrive as strings
      const id: string = req.params.id;

      // get cosmos db details and open the collection
      const url = process.env.COSMOS_DB_HOSTURL;
      const key = process.env.COSMOS_DB_KEY;
      const coll = await new Collection(process.env.COSMOS_DB_COLLECTION_NAME, process.env.COSMOS_DB_NAME, url, key).openOrCreateDatabaseAsync();

      if (id) {
        // query cosmos for the documents matching the id
        const allDocs = await coll.queryDocuments(
          {
            query: "select * from vehicle v where v.id = @id",
            parameters: [{ name: "@id", value: id }]
          },
          { enableCrossPartitionQuery: true, maxItemCount: 10 }).toArray();

        // build the response
        context.res = {
          body: allDocs
        };
      } else {
        context.res = {
          status: 400,
          body: `No records found for the id: ${id}`
        };
      }
      // context.done();
    }
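One detail worth handling explicitly is the id itself. TypeScript annotations are erased at runtime, so whatever type the handler declares, the route parameter arrives as a string (or not at all). A minimal sketch of that check, kept free of Functions and CosmosDB dependencies so it can be tried on its own, might look like this. The helper names `normalizeVehicleId` and `missingIdResponse` and the error message are my own, not part of the sample.

```typescript
// Minimal response shape, mirroring what the handler assigns to context.res.
interface HttpResponse {
  status: number;
  body: string;
}

// Hypothetical helper: return a trimmed, non-empty id, or undefined if unusable.
function normalizeVehicleId(raw: unknown): string | undefined {
  if (typeof raw !== "string") {
    return undefined;
  }
  const id = raw.trim();
  return id.length > 0 ? id : undefined;
}

// Hypothetical helper: the 400 response to send when no usable id was supplied.
function missingIdResponse(): HttpResponse {
  return { status: 400, body: "Please pass a vehicle id in the URL" };
}

console.log(normalizeVehicleId(" 42 "));    // 42
console.log(normalizeVehicleId(""));        // undefined
console.log(normalizeVehicleId(undefined)); // undefined
console.log(missingIdResponse().status);    // 400
```

In the handler, the result of `normalizeVehicleId(req.params.id)` would be what gets passed to the query, with `missingIdResponse()` assigned to context.res when it comes back undefined.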

Using Bindings with CosmosDB

While the previous section used code to perform the GET operation, we can also use Bindings for CosmosDB, which allow us to perform operations on our CosmosDB Collection whenever the HTTP Trigger is fired. Below is how the HTTP POST is configured to leverage the Binding with CosmosDB. As before, the anonymous authLevel is only for local testing; don't do this when deploying to Azure.

    {
      "disabled": false,
      "scriptFile": "../vehicle-api-output-debug/vehicle-api-post/index.js",
      "bindings": [
        {
          "authLevel": "anonymous",
          "type": "httpTrigger",
          "direction": "in",
          "name": "req",
          "route": "data",
          "methods": [
            "post"
          ]
        },
        {
          "type": "documentDB",
          "name": "$return",
          "databaseName": "vehiclelog",
          "collectionName": "vehicle",
          "createIfNotExists": false,
          "connection": "COSMOS_DB_CONNECTIONSTRING",
          "direction": "out"
        }
      ]
    }

Then in your code, you can simply return the incoming JSON request, and Azure Functions takes care of pushing the values into CosmosDB.

    export function run(context: any, req: any): void {
      context.log("HTTP trigger for POST operation.");

      let err;
      let json;

      if (req.body !== undefined) {
        json = JSON.stringify(req.body);
      } else {
        err = {
          status: 400,
          body: "Please pass the Vehicle data in the request body"
        };
      }

      context.done(err, json);
    }
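Because the binding writes whatever the function returns, it can be worth checking the body a little more strictly before handing it over. The following is a sketch of that idea as a pure function, independent of the Functions runtime. `prepareDocument` and `PostOutcome` are hypothetical names, the second error message is my own, and the `id` and `make` fields in the example are made up for illustration; the sample does not define a vehicle schema.

```typescript
// Result of inspecting the request body before handing it to the output binding.
type PostOutcome =
  | { ok: true; document: string }
  | { ok: false; status: number; message: string };

// Hypothetical helper: accept only a non-empty JSON object as a vehicle document.
function prepareDocument(body: unknown): PostOutcome {
  if (body === null || typeof body !== "object" || Array.isArray(body)) {
    return { ok: false, status: 400, message: "Please pass the Vehicle data in the request body" };
  }
  if (Object.keys(body as object).length === 0) {
    return { ok: false, status: 400, message: "The Vehicle data must not be empty" };
  }
  return { ok: true, document: JSON.stringify(body) };
}

// The fields below are illustrative only.
const accepted = prepareDocument({ id: "1", make: "sample" });
const rejected = prepareDocument(undefined);
console.log(accepted.ok, rejected.ok); // true false
```

When the outcome is `ok`, its `document` is what would be passed as the second argument to context.done, as in the listing above.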

OneClick deployment to Azure using VSCode Extensions

Deployment to Azure from the VSCode extension is straightforward. The interface allows you to create a FunctionApp in Azure and then provides a step by step workflow to deploy your functions into the FunctionApp.

If all goes well, you should see output such as below.

Using Subscription "".

Using resource group "".

Using storage account "".

Creating new Function App "sample-vehicle-api-azfunc"...

>>>>>> Created new Function App "sample-vehicle-api-azfunc": https://<your-url>.azurewebsites.net <<<<<<

00:27:52 sample-vehicle-api-azfunc: Creating zip package...

00:27:59 sample-vehicle-api-azfunc: Starting deployment...

00:28:06 sample-vehicle-api-azfunc: Fetching changes.

00:28:14 sample-vehicle-api-azfunc: Running deployment command...

00:28:20 sample-vehicle-api-azfunc: Running deployment command...

00:28:26 sample-vehicle-api-azfunc: Running deployment command...

00:28:31 sample-vehicle-api-azfunc: Running deployment command...

00:28:37 sample-vehicle-api-azfunc: Running deployment command...

00:28:43 sample-vehicle-api-azfunc: Running deployment command...

00:28:49 sample-vehicle-api-azfunc: Running deployment command...

00:28:55 sample-vehicle-api-azfunc: Running deployment command...

00:29:00 sample-vehicle-api-azfunc: Running deployment command...

00:29:06 sample-vehicle-api-azfunc: Running deployment command...

00:29:12 sample-vehicle-api-azfunc: Running deployment command...

00:29:17 sample-vehicle-api-azfunc: Running deployment command...

00:29:24 sample-vehicle-api-azfunc: Syncing 1 function triggers with payload size 144 bytes successful.

>>>>>> Deployment to "sample-vehicle-api-azfunc" completed. <<<<<<

HTTP Trigger Urls:

vehicle-api-get: https://sample-vehicle-api-azfunc.azurewebsites.net/api/vehicle-api-get

Some observations:

- The extension bundles everything in the App folder, including files like local.settings.json and the output .js directories; I could not find a way to filter these using the extension.

- Another problem I have faced is that currently neither the extension nor the CLI provides a way to upload Application Settings as environment variables so they can be accessed by code once deployed to Azure, so these have to be added manually to make things work. For this sample, you will need to add the following key-value pairs under FunctionApp -> Application Settings in the Azure Portal so they are available as environment variables:

"COSMOS_DB_HOSTURL": "https://your cosmos-url:443/",

"COSMOS_DB_KEY": "your-key",

"COSMOS_DB_NAME":"your-db-name",

"COSMOS_DB_COLLECTION_NAME":"your-collection-name",

"COSMOS_DB_CONNECTIONSTRING":"your-connection-string"

- If you are only running it locally, you can use local.settings.json. There is also a way through the CLI to publish the local settings values into Azure using the --publish-local-settings flag, but hey, there is a reason these are local values!

- The Node version supported by Azure Functions is v6.5.0, so while you can locally play with higher versions, you will have to downgrade to 6.5.0 as of today.
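Since these settings are added by hand, it is easy to miss one and only find out when a request fails. A small guard can make that failure obvious. The sketch below is one possible shape for it: `missingSettings` and the `Env` type are hypothetical, and the environment is passed in as a parameter so the example runs without Azure (in a function you would pass process.env).

```typescript
// The application settings the sample code and binding expect to find.
const REQUIRED_SETTINGS = [
  "COSMOS_DB_HOSTURL",
  "COSMOS_DB_KEY",
  "COSMOS_DB_NAME",
  "COSMOS_DB_COLLECTION_NAME",
  "COSMOS_DB_CONNECTIONSTRING",
];

type Env = { [name: string]: string | undefined };

// Hypothetical helper: list the settings that are missing or empty.
function missingSettings(env: Env): string[] {
  return REQUIRED_SETTINGS.filter((name) => !env[name]);
}

// Example with a partial environment; the values are placeholders.
const sampleEnv: Env = {
  COSMOS_DB_HOSTURL: "https://example.invalid:443/",
  COSMOS_DB_KEY: "placeholder-key",
};
console.log(missingSettings(sampleEnv));
// [ 'COSMOS_DB_NAME', 'COSMOS_DB_COLLECTION_NAME', 'COSMOS_DB_CONNECTIONSTRING' ]
```

Logging the result at the top of a handler, or returning a 500 that names the missing keys, turns a confusing connection error into a clear configuration message.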

In case you guys have a better way to deploy to Azure, do let me know :).

Configuring Azure Function Proxies for our API

At this point, we have a working API available in Azure. We have leveraged the CQRS approach (loosely) to have a separate Read API and a separate Write API. For the client, however, working with multiple endpoints can quickly become cumbersome. We need a way to package our API into a facade that is consistent and manageable; this is where Azure Function Proxies come in.

Azure Function Proxies is a toolkit available as part of the Azure Functions stack and provides the following features:

- Consistent routing behavior for the underlying functions in the FunctionApp, which can even include external endpoints.

- A mechanism to aggregate underlying APIs into a single API facade. In a way, it is a lightweight Gateway service to your underlying Functions.

- A mock-up proxy to test your endpoint without having integration points. This is useful when testing the request routing with dummy data.

- Support for OpenAPI, one of the key aspects added to Proxies, which allows more out-of-the-box connectors to other services.

- Out-of-the-box AppInsights support, where a proxy can publish events to AppInsights to generate endpoint metrics not just for functions but also for legacy APIs.

If you are familiar with the Application Request Routing (ARR) stack in IIS, this is somewhat similar. In fact, if you look at the Headers and Cookies for the request processed by the Proxy, you should see some familiar attributes 😉

......
Server → Microsoft-IIS/10.0
X-Powered-By → ASP.NET
......
Cookies: ARRAffinity

Let’s use Function Proxies for our API.

In the previous sections, I showed how we could use the routePrefix in host.json in conjunction with route in function.json. While that approach works, we have to add configuration for each function, which can become a maintenance overhead. Additionally, if I want an external API to share the same route path, that is not possible using the earlier approach. Proxies can help overcome this barrier.

Using proxies, we can develop logical endpoints while keeping the configuration centralized. We will use Azure Function Proxies to surface our two functions as a consistent API Endpoint, so essentially to the client, it will look like a single API interface.

Before we continue, we will remove the route attributes we added to our functions, keeping only the variable references, and change the routePrefix to just "". Our published Function endpoints should now look something like this:

Http Functions:

vehicle-api-get: https://sample-vehicle-api-azfunc.azurewebsites.net/{id}

vehicle-api-post: https://sample-vehicle-api-azfunc.azurewebsites.net/vehicle-api-post/

This is obviously not intuitive; with multiple Functions, it can become a nightmare for the client to consume our Service. We create two Proxies that define the route path and match criteria for our Functions. You can easily create proxies from the Azure Portal UI, but you can also write your own proxies.json. The following shows how to define proxies and associate them with our Functions.

    {
      "$schema": "http://json.schemastore.org/proxies",
      "proxies": {
        "VehicleAPI-Get": {
          "matchCondition": {
            "route": "api/vehicle/{id}",
            "methods": [
              "GET"
            ]
          },
          "backendUri": "https://sample-vehicle-api-azfunc.azurewebsites.net/{id}"
        },
        "VehicleAPI-POST": {
          "matchCondition": {
            "route": "/api/vehicle",
            "methods": [
              "POST"
            ]
          },
          "backendUri": "https://sample-vehicle-api-azfunc.azurewebsites.net/vehicle-api-post"
        }
      }
    }

As of today, there is no functionality to upload proxies.json to Azure, but you can easily copy and paste it into the Portal's Advanced Editor.

We have two proxies defined here. The first is for our GET operation and the other for POST. In both cases, we have been able to define a consistent routing mechanism for selected REST verbs. The key attributes here are route and backendUri, which allow us to map a public route to an underlying endpoint. Note that the backendUri can be anything that needs to be called under the same API facade, so we can combine multiple services behind a common gateway route using this approach.
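The mapping from a public route to a backendUri can be illustrated with a small matcher. To be clear, this is a simplified model written for this post and not how the Proxies feature is implemented: `ProxyRule` and `resolveBackend` are hypothetical names, matching is done segment by segment, and only simple `{name}` placeholders are handled.

```typescript
// Simplified shape of one proxy entry.
interface ProxyRule {
  route: string;      // e.g. "api/vehicle/{id}"
  methods: string[];  // e.g. ["GET"]
  backendUri: string; // may reuse the {placeholders} from the route
}

// Hypothetical matcher: return the backend URL for a request, or undefined.
function resolveBackend(rule: ProxyRule, method: string, path: string): string | undefined {
  if (rule.methods.indexOf(method.toUpperCase()) === -1) {
    return undefined;
  }
  const trim = (s: string) => s.replace(/^\/+|\/+$/g, "");
  const routeParts = trim(rule.route).split("/");
  const pathParts = trim(path).split("/");
  if (routeParts.length !== pathParts.length) {
    return undefined;
  }
  let backend = rule.backendUri;
  for (let i = 0; i < routeParts.length; i++) {
    const placeholder = /^\{(\w+)\}$/.exec(routeParts[i]);
    if (placeholder) {
      // Copy the captured path segment into the backend URL.
      backend = backend.split("{" + placeholder[1] + "}").join(encodeURIComponent(pathParts[i]));
    } else if (routeParts[i].toLowerCase() !== pathParts[i].toLowerCase()) {
      return undefined;
    }
  }
  return backend;
}

const getRule: ProxyRule = {
  route: "api/vehicle/{id}",
  methods: ["GET"],
  backendUri: "https://sample-vehicle-api-azfunc.azurewebsites.net/{id}",
};
console.log(resolveBackend(getRule, "GET", "/api/vehicle/42"));
// https://sample-vehicle-api-azfunc.azurewebsites.net/42
console.log(resolveBackend(getRule, "POST", "/api/vehicle/42")); // undefined
```

The same rule shape works for a backendUri on a completely different host, which is what makes the facade useful for fronting external or legacy APIs.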

Can you do this with other services? I would have to say yes. You can implement similar routing functionality with Application Gateway, NGINX, or Azure API Management. You can also use a web framework like Express and write a single function that does all this routing. So, evaluate the options and choose what works best for your scenario.

Testing our Vehicle API

We now have our Vehicle API endpoints exposed through Azure Function Proxies. We can test it using any HTTP Client. I use Postman for the requests, but you can use any of your favorite clients.

GET Operation

The exposed endpoint from the Proxy is:

https://sample-vehicle-api-azfunc.azurewebsites.net/api/vehicle/{id}

Our GET request fetches the correct results from CosmosDB

POST Operation

The exposed endpoint from the Proxy is:

https://sample-vehicle-api-azfunc.azurewebsites.net/api/vehicle/

Our POST request pushes a new record into CosmosDB:

There we have it. Our Vehicle API that leverages Azure Function Proxies and TypeScript is now up and running!

Do have a look at the source code here (https://github.com/niksacdev/sample-api-typescript) and please provide your feedback.

Happy Coding :).